OJK AI Governance: 3 Critical Architecture Decisions Builders Miss

14 March, 2026

Most Indonesian bank AI projects treat OJK AI governance as the final step. The teams that reach production treat it as the first constraint. The difference comes down to three architecture decisions made early — or not made at all.

In every Indonesian banking AI engagement that reaches production, one pattern holds: governance comes first. Not as documentation to be filed after the system is built — but as the input that shapes the architecture from the start. Specifically, that means mapping which OJK regulations govern the use case, what the explainability requirement looks like in practice, where the data actually lives, and what a compliance examiner would need to see to sign off on a production system.

This order of operations sounds obvious. In practice, it is the exception. RSM Indonesia’s 2025 AI governance review found that while enthusiasm for AI in Indonesian banking is high, governance maturity is lagging — with inadequate infrastructure, lack of model explainability, poor data quality, and weak system integration identified as the four dominant failure dimensions. These are architecture problems, not attitude problems. They are the direct result of building the system first and asking the compliance question second.

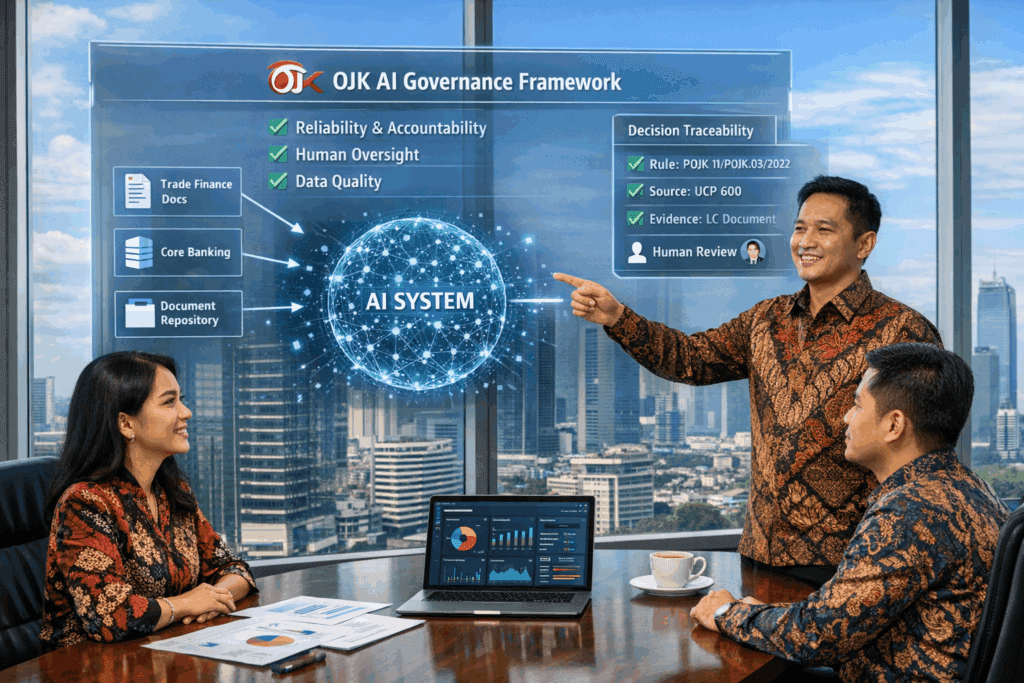

Here is what Redpumpkin.AI learned building Agentic AI into production for an Indonesian bank — and specifically, how the OJK AI Governance Framework forced three architecture decisions that most vendor demos never touch.

What does OJK’s AI governance framework actually require in practice?

OJK launched the Artificial Intelligence Governance for Indonesian Banking guidebook in April 2025, positioning it as the minimum benchmark for the entire banking sector. It draws on the Basel Committee on Banking Supervision, the EU AI Act, and US OCC guidance — meaning the requirements are internationally converged, not locally idiosyncratic.

The framework has three core requirements that matter for builders:

Reliability and accountability. AI decisions must align with the bank’s strategy and risk appetite. Every decision must be fully traceable — not just to the model output, but to the input data and the rules applied. Accountability cannot be delegated to the vendor.

Human oversight. Human supervision is essential for building trust in AI systems. The framework is explicit that AI must support human decision-making, not replace the accountability chain. This is a hard governance requirement that defines what “production-ready” means in Indonesian banking — and it must be reflected in how the system is designed, not just described in documentation.

Data quality and resilience. OJK’s December 2024 update to its AI ethics code strengthened requirements around model and data reliability, data protection, and cyber resilience. The system must remain compliant as data and rules evolve, which has direct implications for how the AI is architected to handle rule changes.

Why this matters to builders

These three requirements must be present in the code from sprint one. Explainability, human override capability, and resilient data connectivity are system properties: they have to be designed in, not retrofitted from the compliance team’s comments after the system is built.

What are the three architecture decisions OJK forces from the start?

When Redpumpkin.AI built Agentic AI into a major Indonesian bank’s trade finance workflow, three architecture decisions were forced directly by the OJK framework — not by preference, and not by vendor default. The same three requirements appear consistently across OJK’s published governance standards for document-intensive, compliance-sensitive banking use cases. They cannot be deferred, and the project record below shows why.

| OJK Requirement | Wrong Approach | Forced Architecture Decision |
|---|---|---|
| Accountability & traceability | Black-box model output with post-hoc explanation | Evidence-first reasoning: every conclusion cites the exact source document, field, and rule that triggered it, before the output is generated |
| Human oversight | Human review step bolted on at the end of the pipeline | Human-in-the-loop designed as a first-class system component: routing logic, override logging, feedback capture, and reviewer audit trail built in from sprint one |
| Data quality & resilience | ETL (Extract, Transform, Load) pipeline that centralises data into a new store | Zero-migration source connectivity: the system reads data at its origin (core banking, document repositories, trade portals), so data governance stays with the bank and the system does not create a new compliance surface |

Each of these decisions adds complexity upfront. Each one prevents a category of failure that would otherwise appear in production — and in some cases, in an OJK examination.

Why is data fragmentation the hardest OJK compliance problem to solve?

The OJK requirement for data quality sounds straightforward. In practice, Indonesian bank data is structurally fragmented in ways that most AI vendors underestimate before the engagement starts and quietly blame on the client after it stalls.

Oradian’s analysis of Indonesian digital banking identifies three structural data factors specific to Indonesia: first, customer and transaction data sits across origination systems, core banking, digital channels, and third-party providers with inconsistent formatting and weak linkage; second, OJK’s explainability requirements mean off-the-shelf models do not meet the governance standard without significant adaptation; and third, Indonesia’s geographic heterogeneity means data patterns that hold in Jakarta do not necessarily hold in East Java, Sulawesi, or Kalimantan.

For a trade finance AI system, this fragmentation takes a specific form. Bank staff are verifying letters of credit against invoices, bills of lading, and internal compliance documents. These documents do not live in one system. They arrive in different formats — PDFs, scanned images, email attachments, physical paper that has been digitised with varying quality. The data does not become clean when it enters the bank’s systems. It arrives fragmented and stays that way.

The wrong response is to solve this with ETL (Extract, Transform, Load): pull the data from each source, normalise it, load it into a centralised store, and then run the AI against the unified copy. This approach fails OJK’s data residency and governance requirements, because the ETL process creates a new data copy that sits outside the bank’s existing governance controls. It also fails in production because the ETL pipeline must be maintained as source systems change, which they do constantly in an active banking environment.

The correct architecture is source connectivity: the AI connects to each data origin directly, works with the data in place, and does not create a copy. This keeps the bank’s existing data governance intact and keeps Redpumpkin.AI out of the data custodianship role — which is where it should be. The bank owns the data. The AI reads it.
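
The read-in-place idea can be sketched as a thin read-only interface. This is a minimal illustration under stated assumptions, not the deployed implementation: `SourceConnector`, `InMemoryCoreBanking`, `SourceRecord`, and `read_field` are hypothetical names, and an in-memory dict stands in for a real system of record.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from types import MappingProxyType
from typing import Mapping, Protocol


@dataclass(frozen=True)
class SourceRecord:
    """A value read in place, tagged with where and when it was read."""
    system: str                 # hypothetical system name
    record_id: str
    fields: Mapping[str, str]   # read-only view of the source row
    read_at: datetime


class SourceConnector(Protocol):
    """Read-only contract: a connector exposes fetch() and nothing that writes."""
    system: str

    def fetch(self, record_id: str) -> SourceRecord: ...


class InMemoryCoreBanking:
    """Stand-in for a real system of record (assumption for illustration)."""
    system = "core_banking"

    def __init__(self, rows: dict[str, dict[str, str]]) -> None:
        self._rows = rows

    def fetch(self, record_id: str) -> SourceRecord:
        # MappingProxyType wraps the source row without copying it
        # and rejects any attempt to modify it through the view.
        return SourceRecord(
            system=self.system,
            record_id=record_id,
            fields=MappingProxyType(self._rows[record_id]),
            read_at=datetime.now(timezone.utc),
        )


def read_field(connector: SourceConnector, record_id: str, name: str) -> tuple[str, str]:
    """Return the field value plus a provenance string for the audit trail."""
    record = connector.fetch(record_id)
    provenance = f"{record.system}:{record.record_id}:{name}"
    return record.fields[name], provenance
```

The design choice worth noting is that every value leaves the connector with its provenance attached, so downstream reasoning can cite the origin system rather than an intermediate store.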

How should an Indonesian bank handle OJK’s evolving regulatory framework in an AI system?

OJK’s AI governance framework is explicitly described as a minimum benchmark that must be complemented by ongoing regulatory updates. The framework references multiple evolving standards: POJK 11/POJK.03/2022 on IT implementation, SEOJK 29/SEOJK.03/2022 on cyber defence, and the Digital Resilience Guideline — each with its own update cycle. Beyond OJK, trade finance rules like ICC UCP 600 are reinterpreted and applied differently over time, and internal bank policy thresholds change with risk appetite and management direction.

This creates a specific problem for AI systems built with fine-tuned or statically trained models: rule changes make the model’s embedded knowledge stale, and stale knowledge in a regulated system is a compliance liability, not just an accuracy issue. A model that returns an outdated compliance answer because its training predates a new OJK circular does not fail visibly. It produces confident output grounded in superseded rules. An examiner reviewing the audit trail will find the failure; the bank will find it later.

The core requirement is change-resilient grounding: the AI system must incorporate updated rules without a full model retrain, and every conclusion must be traceable to the version of the rule that was current at decision time.
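
One way to picture "the version of the rule that was current at decision time" is a registry that never overwrites a rule and looks versions up by effective date. The sketch below is hedged: `RuleRegistry`, `RuleVersion`, and the rule identifiers and texts in the usage are invented for illustration and do not quote any OJK or bank document.

```python
from __future__ import annotations

from bisect import bisect_right
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str          # hypothetical identifier
    version: str
    effective_from: date
    text: str


class RuleRegistry:
    """Keeps every published version of every rule; nothing is overwritten."""

    def __init__(self) -> None:
        self._versions: dict[str, list[RuleVersion]] = {}

    def publish(self, rule: RuleVersion) -> None:
        versions = self._versions.setdefault(rule.rule_id, [])
        versions.append(rule)
        # Keep versions ordered by effective date so lookups can bisect.
        versions.sort(key=lambda r: r.effective_from)

    def in_force(self, rule_id: str, on: date) -> RuleVersion:
        """Return the version with the latest effective date that is <= `on`."""
        versions = self._versions.get(rule_id, [])
        dates = [v.effective_from for v in versions]
        idx = bisect_right(dates, on)
        if idx == 0:
            raise LookupError(f"no version of {rule_id} in force on {on}")
        return versions[idx - 1]


registry = RuleRegistry()
registry.publish(RuleVersion("internal-threshold", "v1", date(2024, 1, 1), "example text A"))
registry.publish(RuleVersion("internal-threshold", "v2", date(2025, 6, 1), "example text B"))
print(registry.in_force("internal-threshold", date(2025, 3, 1)).version)
```

Updating a rule here is a `publish` call rather than a retrain, and a past decision can be re-examined against the version that applied on its date.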

One architecture pattern that addresses this well is RAG (Retrieval-Augmented Generation). Instead of relying on knowledge encoded during training, a RAG-based system retrieves the current governing document first — the relevant OJK regulation, the bank’s internal policy threshold, the UCP 600 clause at issue — before generating any conclusion. The retrieved source is then logged as part of the audit trail, making the evidence chain inspectable after the fact.
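
The retrieve-then-log order described above can be sketched in a few lines. Two loud assumptions: token overlap stands in for a real retriever (a production system would more likely use embedding search), and the generation step is stubbed with a template instead of a language model call. `Passage`, `retrieve`, and `answer_with_audit` are hypothetical names.

```python
from __future__ import annotations

import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    doc_id: str     # hypothetical identifiers for illustration
    version: str
    clause: str
    text: str


def retrieve(query: str, corpus: list[Passage], k: int = 1) -> list[Passage]:
    """Rank passages by shared lowercase tokens (stand-in for vector search)."""
    q = set(query.lower().split())
    scored = [(len(q & set(p.text.lower().split())), p) for p in corpus]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [p for score, p in ranked[:k] if score > 0]


def answer_with_audit(query: str, corpus: list[Passage], audit_log: list[str]) -> str:
    """Retrieve first, log what was retrieved, then produce the answer."""
    sources = retrieve(query, corpus)
    audit_log.append(json.dumps({
        "query": query,
        "sources": [
            {"doc_id": s.doc_id, "version": s.version, "clause": s.clause}
            for s in sources
        ],
    }))
    if not sources:
        # No grounding means no generated conclusion.
        return "No governing source found; route to human review."
    # A real system would pass `sources` to a language model here.
    s = sources[0]
    return f"Per {s.doc_id} {s.version}, clause {s.clause}: {s.text}"
```

The log entry is written before any answer is returned, including when nothing is retrieved, so the audit trail also records the cases where the system declined to conclude.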

Other technical approaches exist, but the underlying requirement is the same regardless of implementation: the system must show what it relied on, and what it relied on must reflect current rules. How you achieve that is an architecture decision. That you achieve it is an OJK requirement.

What a compliance team actually needs to see

An OJK examiner reviewing a trade finance decision asks: “What rule did you apply, and what was your source?” A system grounded in retrieved evidence answers with a citation: the specific document, version, clause, and extraction date. A system relying solely on a fine-tuned model’s internal weights answers with a confidence score. In a regulated environment, these are not equivalent answers — and which one you can produce is determined by architecture decisions made early in the build.

What is the build sequence that actually gets Agentic AI to production?

The sequence that reaches production consistently has the OJK governance framework as its first input — not its final checklist. The steps below apply specifically to document-intensive use cases like trade finance, where data fragmentation and auditability requirements are highest.

1. Governance scoping before architecture scoping

Identify which OJK regulations govern the use case. Map the explainability, data residency, and human oversight requirements before writing a single line of architecture. Done early, this scoping prevents the most expensive category of rework — discovering a governance gap after the system is already built.

2. Data connectivity audit

Map every data source the AI will need to read: which systems, what formats, what access controls exist, what data does not currently exist in structured form. The audit reveals the real scope of the project. Most vendors skip this and discover the problem during implementation.
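
A connectivity audit of this kind can start as a plain inventory with a gap check. The sketch below is illustrative only: the `DataSource` fields, the two gap categories, and the example systems are assumptions, not a prescribed OJK checklist.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataSource:
    name: str                      # hypothetical system name
    formats: tuple[str, ...]       # e.g. ("pdf", "scanned_image")
    access_control: Optional[str]  # None = no documented access control yet
    structured: bool               # False = needs extraction before use


def audit_sources(sources: list[DataSource]) -> dict[str, list[str]]:
    """Group source names by the gap they expose; empty lists mean no gap."""
    findings: dict[str, list[str]] = {
        "no_access_control": [],
        "unstructured": [],
    }
    for src in sources:
        if src.access_control is None:
            findings["no_access_control"].append(src.name)
        if not src.structured:
            findings["unstructured"].append(src.name)
    return findings


inventory = [
    DataSource("core_banking", ("sql",), "role_based", True),
    DataSource("document_repository", ("pdf", "scanned_image"), "role_based", False),
    DataSource("email_attachments", ("pdf",), None, False),
]
print(audit_sources(inventory))
```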

3. Design the human review loop before the AI pipeline

Define what the human reviewer sees, what they can override, how their decision is logged, and how that log feeds back into the system. This is the accountability chain OJK requires. It must exist in the design before the AI pipeline is built — not after.
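
A minimal sketch of two parts of that loop, routing and override logging, follows. It assumes a hypothetical confidence threshold and record shapes (`REVIEW_THRESHOLD`, `AiDecision`, `ReviewEntry`); feedback capture and the reviewer-facing view are left out.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.90  # hypothetical value; the bank's risk appetite sets it


@dataclass(frozen=True)
class AiDecision:
    case_id: str
    outcome: str       # "compliant" or "discrepancy"
    confidence: float


@dataclass(frozen=True)
class ReviewEntry:
    case_id: str
    reviewer: str
    ai_outcome: str
    final_outcome: str
    overridden: bool
    reason: str
    reviewed_at: datetime


def needs_review(decision: AiDecision) -> bool:
    """Route discrepancies and low-confidence results to a human."""
    return decision.outcome != "compliant" or decision.confidence < REVIEW_THRESHOLD


def record_review(
    log: list[ReviewEntry],
    decision: AiDecision,
    reviewer: str,
    final_outcome: str,
    reason: str = "",
) -> ReviewEntry:
    """Append the reviewer's decision; an override without a reason is rejected."""
    overridden = final_outcome != decision.outcome
    if overridden and not reason.strip():
        raise ValueError("an override must state a reason")
    entry = ReviewEntry(
        case_id=decision.case_id,
        reviewer=reviewer,
        ai_outcome=decision.outcome,
        final_outcome=final_outcome,
        overridden=overridden,
        reason=reason,
        reviewed_at=datetime.now(timezone.utc),
    )
    log.append(entry)
    return entry
```

Keeping both the AI outcome and the final outcome on the same entry is what lets the log show who decided what, and it gives a later feedback step something concrete to learn from.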

4. Build the evidence chain first

Every output the AI produces must be traceable to a source document, field, and rule. Build the evidence chain architecture — how sources are indexed, how citations are generated, how audit logs are structured — before building the reasoning pipeline. The reasoning pipeline must be designed to produce this chain, not bolted onto a system that already exists.
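
One way to make "designed to produce this chain" concrete is a conclusion type that cannot be constructed without evidence. This is a sketch under assumptions: `Evidence`, `Conclusion`, `check_amount`, the file names, and rule `R-01` are hypothetical, and the amount comparison is an invented internal check, not a statement of any UCP 600 clause.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    document: str     # source document the value came from
    field_name: str   # field within that document
    rule: str         # identifier of the rule that was applied


@dataclass(frozen=True)
class Conclusion:
    statement: str
    evidence: tuple[Evidence, ...]

    def __post_init__(self) -> None:
        # The type itself enforces the evidence-first constraint.
        if not self.evidence:
            raise ValueError("a conclusion must cite at least one piece of evidence")


def check_amount(lc_amount: float, invoice_amount: float) -> Conclusion:
    """Hypothetical rule R-01: invoice total must not exceed the LC amount."""
    # Evidence is assembled before the conclusion is formed.
    evidence = (
        Evidence("letter_of_credit.pdf", "amount", "R-01"),
        Evidence("commercial_invoice.pdf", "total", "R-01"),
    )
    within = invoice_amount <= lc_amount
    statement = "invoice within LC amount" if within else "invoice exceeds LC amount"
    return Conclusion(statement, evidence)
```

Because the check lives in the constructor, a reasoning step that forgets its citations fails at build time instead of surfacing during an examination.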

5. Production handover as a designed output

The bank’s team must be able to operate the system without the vendor. This means full documentation of the architecture decisions, the governance mapping, the knowledge base update process, and the human review logic. Under OJK’s resilience requirements, vendor dependency is a governance risk — a bank that cannot operate or audit its own AI system in the absence of the vendor has a structural compliance gap.

What did this architecture produce in the trade finance deployment?

When Redpumpkin.AI applied this sequence to a major Indonesian bank’s trade finance verification workflow, the outcome was not just a faster system. It was a system that the bank’s compliance team could stand behind in an examination — because the audit trail was built into the architecture from day one, not written into a report afterward.

The operational results are documented in the full case study: document verification time reduced from 4 days to 15 minutes, anomaly detection accuracy reaching 98%, and OJK Digital Resilience standards met with a fully human-auditable reasoning chain. The compliance outcomes mattered as much as the speed outcomes. A system that is fast but not auditable cannot be deployed in a regulated Indonesian bank. This one was both.

For Indonesian banks looking to move beyond the pilot stage, the implication is direct: the OJK compliance framework is not a constraint to satisfy after the system is built. It is the specification the system must be built to from the start. Banks that treat OJK governance as a final review step end up in pilot. Banks that treat it as a design input end up in production.

Benchmark your data readiness.

We’re compiling our findings from the field into the Indonesia AI Reality Check for BFSI. This report outlines the specific technical hurdles we encountered and the operational benchmarks we achieved.

FAQ

What does OJK’s AI Governance framework actually require from Indonesian banks?

OJK’s AI Governance for Indonesian Banking (launched April 2025) sets three core requirements: reliability and accountability — AI decisions must be fully traceable; human oversight — humans must be able to review, override, and log any decision; and data quality and resilience — the system must remain compliant as rules and data evolve. OJK treats these as minimum benchmarks for the entire banking sector.

Why do most AI pilots in Indonesian banks fail to reach production?

The most common failure mode is treating OJK compliance as documentation work rather than architecture work. Teams build a capable AI system, then try to retrofit explainability and auditability at the end. OJK requirements cannot be added after the system is built — they must be designed in from sprint one. RSM Indonesia’s 2025 governance review identified this governance maturity gap as the dominant challenge across Indonesian financial institutions.

What is the difference between explainability and auditability in Indonesian banking AI?

Explainability means the system can describe why it reached a conclusion. Auditability means that explanation is traceable, logged, and reviewable by a third party — including OJK examiners. Explainability is a model property. Auditability is a systems property. OJK’s framework requires the latter. A system that explains itself to a user but cannot produce a structured audit trail for a compliance examination does not meet the governance standard.

How does data fragmentation in Indonesian banks affect AI architecture decisions?

Indonesian bank data is typically fragmented across core banking systems, digital channels, document repositories, and third-party providers — with inconsistent formatting and weak linkage between systems. This forces a choice: centralise the data via ETL (Extract, Transform, Load), or connect to each source directly. The latter is more OJK-compliant because it keeps data governance with the bank and does not create a new compliance surface. Redpumpkin.AI builds source connectivity, not data copies.

What is RAG and why is it relevant to OJK compliance in Indonesian banking?

RAG (Retrieval-Augmented Generation) is an architecture pattern where an AI retrieves the current governing rule or document before generating any conclusion, rather than relying on knowledge encoded during training. In OJK-regulated banking, rules change — OJK issues new circulars, banks update internal thresholds, ICC UCP 600 interpretations evolve. A system that retrieves the current rule before generating a conclusion can incorporate those changes without retraining, and its audit trail shows exactly which version of which rule was applied. This makes compliance drift visible rather than silent — which is what OJK’s framework requires when it mandates traceable, auditable decision-making. RAG is one architecture pattern that achieves this; the underlying requirement is that the system can show what it relied on and that what it relied on was current.

What is the biggest hidden cost when an Indonesian bank builds AI without governance-first architecture?

The most expensive hidden cost is rework. When a bank builds an AI system and then brings in the compliance team at the end, the governance gaps they find — missing audit trails, insufficiently documented decision logic, data copies sitting outside the bank’s existing controls — often require redesigning core components of the system rather than adding documentation. In regulated banking, retrofitting auditability is structurally expensive. The code changes, the data architecture changes, and in some cases the deployment environment must change. Starting with governance requirements as a design input removes this cost category entirely.

Should an Indonesian bank start AI in a high-sensitivity or low-sensitivity use case?

Start with use cases that generate real business value, use data you already have in reliable form, have short feedback loops, and carry lower OJK regulatory sensitivity. Trade finance document verification meets all four for most large Indonesian banks. Credit scoring fails on the last two — the feedback loop is slow and any failure is a regulatory event, not just a technical one. Redpumpkin.AI’s standard recommendation is to establish a production-grade system in a lower-sensitivity use case first, then expand the governance model to higher-sensitivity decisions.

Move from ‘What if’ to ‘How.’

Every institution’s infrastructure is unique. We’re happy to share the specific technical lessons we learned during our Indonesian deployments to help you determine if your current data stack is ready for Agentic AI.

About Redpumpkin.AI

At Redpumpkin.AI, we build a GenAI and agentic AI business platform that helps teams adopt generative AI in a way that is practical, secure, and deployable, without getting stuck in data complexity, privacy concerns, or painful integration work. Our mission is to make GenAI and agentic AI accessible to both SMEs and large enterprises, so we focus on solutions that are simple to use, scalable in production, and customizable to real business needs, backed by strong security and compliance.